Unlocking AI's Hidden Power: How 3 Surprising Collaborations Are Transforming Mental Health – #2 Will Shock You!

As millions of individuals turn to AI chatbots for emotional support, the implications of this trend are profound. Claire Leibowicz, the director of AI, Trust, and Society at The Partnership on AI (PAI), and Emily Saltz, founder and principal researcher of Saltern Studio, emphasize the complexity behind these interactions. While many people use chatbots for mundane tasks like recipe suggestions or travel planning, a significant number are sharing deeply personal issues, including feelings of loneliness and suicidal thoughts. For some, these AI tools serve as the only source of mental health support available to them.

In the United States, the National Institute of Mental Health reports that over 20% of adults are living with a mental illness. However, barriers such as cost, stigma, and a shortage of mental health providers often make access to care nearly impossible. In stark contrast, AI tools are available around the clock, affordable, and anonymous to a degree that many users find comforting. Large language models, the backbone of these AI systems, can detect conversational patterns, apply evidence-based therapeutic techniques, and personalize responses to user queries. This convergence of technological innovation and societal need has led to projections that the AI and mental health sector will reach $9 billion globally within the next seven years.

However, the challenges posed by using AI for mental health support are significant and raise serious ethical questions. Some evidence suggests that AI chatbots may underestimate suicide risk compared to human therapists. Reports have linked chatbot interactions to user suicides, prompting the creation of a “deaths linked to chatbots” Wikipedia page. Chatbots do include safety measures, such as refusing to engage with harmful topics and referring users to crisis hotlines, but the effectiveness of these interventions appears to diminish over the course of multi-turn conversations. Users have even found ways to “jailbreak” chatbots, bypassing their safety protocols.

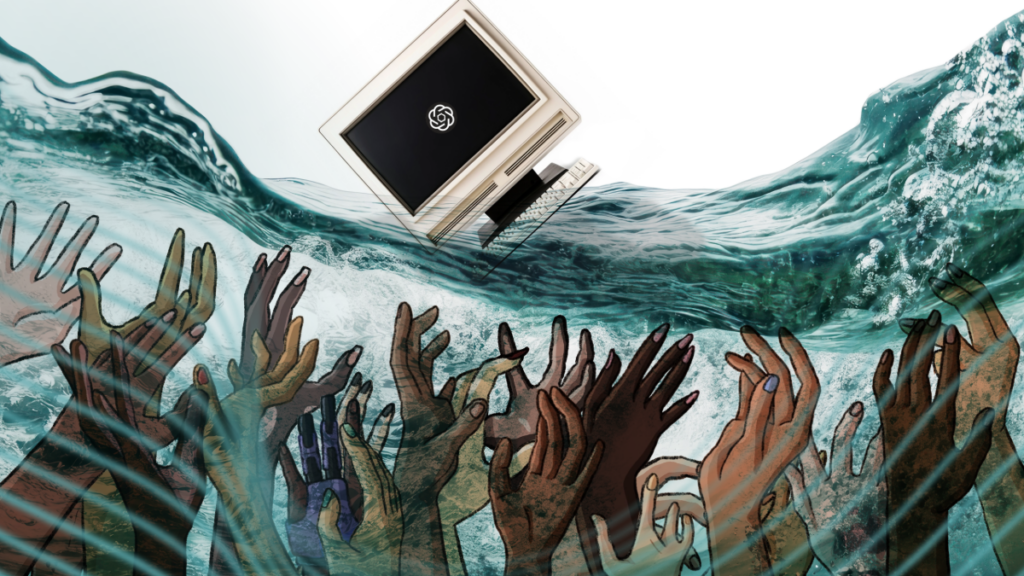

The question then arises: should chatbots avoid discussions about mental health altogether? Such an approach could potentially harm those in crisis by leaving them without any support. This highlights the urgent need for technology companies to prioritize user well-being while actively working to mitigate risks. For the millions who rely on these systems, it is crucial to find ways in which AI can provide meaningful support without exacerbating existing problems.

The narrative surrounding AI and mental health echoes the challenges seen during the rise of social media, where pressing questions about responsibility and user well-being were often sidelined until significant harms became evident. As AI continues to advance at an unprecedented pace, the tech community must avoid repeating these mistakes by engaging in collaborative efforts that bring together experts from various fields.

The Challenges Ahead

The complexities of integrating AI into mental health care cannot be tackled by individual organizations alone. Here are some critical challenges that require cross-sector collaboration:

1. The pace of AI development outstrips mental health research. While AI labs respond to immediate psychological impacts, traditional mental health research often requires extensive time for studies and peer review. This creates a paradox where the urgency of action clashes with the need for solid evidence. AI labs must leverage existing research while simultaneously navigating the uncertainties linked to AI-human interactions.

2. A lack of collaboration among AI labs. Many AI organizations work in isolation, failing to learn from each other’s successes and setbacks. Sharing information could prevent unnecessary harm by letting labs adopt solutions that others have already implemented. Collaborative efforts can also shorten response times and foster standardized safety measures across platforms.

3. Insufficient independent evaluations. Internal assessments by AI labs are not enough. Without independent evaluation, there is no clear understanding of how effective these tools are. Policymakers and the public need transparency in order to craft informed regulations and hold developers accountable.

As the landscape of AI and mental health continues to evolve, the imperative is clear: action must be informed by both technical and clinical expertise. Collaboration between AI developers, mental health professionals, policymakers, and individuals who have experienced these crises is essential in crafting effective approaches.

Although AI chatbots are not a panacea for the systemic issues surrounding mental health—such as economic disparity and healthcare inaccessibility—they do offer a potential lifeline for those in crisis. The real question is whether these tools can provide genuine assistance while society continues to advocate for broader systemic changes. As we navigate these uncharted waters, the stakes could not be higher, and the need for collective action has never been more pressing.
